Fairness Benchmarking Tool

Overview

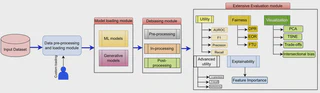

FairX is an open-source, Python-based benchmarking tool for systematically evaluating machine learning models along three dimensions: fairness, utility, and explainability (XAI). As AI systems increasingly influence high-stakes decisions, models need to be not only accurate but also fair and interpretable. FairX gives researchers and practitioners a unified framework for assessing and comparing bias-mitigation techniques.

Unlike most existing fairness toolkits, FairX also supports the evaluation of fair generative models and the synthetic data they produce, filling a gap in the fairness evaluation ecosystem. It benchmarks both predictive and generative models on tabular and image datasets.

Motivation

The rapid deployment of machine learning models in sensitive domains such as healthcare, criminal justice, and lending has exposed serious fairness concerns. Although numerous bias-mitigation techniques have been proposed, systematic evaluation and comparison remain challenging due to:

- Fragmented tooling: Existing tools focus on fairness, utility, or explainability in isolation and rarely integrate all three

- Limited generative model support: Most fairness tools only evaluate predictive models, neglecting the growing importance of synthetic data generation

- Inconsistent evaluation: The lack of standardized evaluation protocols makes it difficult to compare bias-mitigation approaches

- Complexity barriers: Implementing and evaluating multiple fairness techniques requires significant engineering effort

FairX addresses these challenges by providing an integrated platform where researchers can train, evaluate, and compare various fairness interventions using consistent metrics and protocols.

Key Features

1. Comprehensive Model Library

FairX includes implementations of state-of-the-art bias-mitigation techniques across the entire ML pipeline:

- Pre-processing methods: Data reweighting, resampling, and fair representation learning

- In-processing methods: Fairness-constrained optimization, adversarial debiasing

- Post-processing methods: Threshold optimization, calibration techniques

- Fair generative models: Fair GANs, VAEs, and diffusion models for synthetic data generation
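
As an illustration of the pre-processing category, the sketch below implements one widely used reweighting scheme: each sample gets the weight P(group) * P(label) / P(group, label), so that group and label become statistically independent under the weighted distribution. This is a dependency-free sketch for intuition only; the function name `reweighing_weights` and the toy data are assumptions, not FairX's API.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per sample: P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


# Toy data (hypothetical): group "a" gets the positive label more often than "b".
groups = ["a", "a", "a", "b", "b", "b"]
labels = [1, 1, 0, 1, 0, 0]
weights = reweighing_weights(groups, labels)

# Over-represented (group, label) pairs are down-weighted, rare ones up-weighted.
for g, y, w in zip(groups, labels, weights):
    print(g, y, w)
```

With these weights, the weighted positive rate is the same in both groups, which is the property a downstream learner that accepts sample weights can exploit.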

2. Rich Evaluation Metrics

Fairness Metrics:

- Group fairness: Demographic parity, equalized odds, equal opportunity

- Intersectional fairness: Multi-attribute fairness metrics
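
To make the group fairness metrics concrete, here is a minimal sketch of how they can be computed from binary labels, binary predictions, and a sensitive attribute. It is written in plain Python for readability and is not FairX's implementation; the function names and toy data are illustrative assumptions. Equalized odds is reported here as the larger of the true-positive-rate gap and the false-positive-rate gap, which is one common convention.

```python
def _rate(values):
    """Fraction of positive (1) entries; 0.0 for an empty list."""
    values = list(values)
    return sum(values) / len(values) if values else 0.0


def _gap(rates):
    """Largest difference between any two per-group rates."""
    return max(rates) - min(rates)


def demographic_parity_difference(y_pred, groups):
    """Gap in selection rate P(pred = 1) across groups."""
    return _gap([
        _rate(p for p, g in zip(y_pred, groups) if g == group)
        for group in set(groups)
    ])


def _conditional_rate_gap(y_true, y_pred, groups, true_value):
    """Gap in P(pred = 1 | y = true_value) across groups."""
    return _gap([
        _rate(p for t, p, g in zip(y_true, y_pred, groups)
              if g == group and t == true_value)
        for group in set(groups)
    ])


def equal_opportunity_difference(y_true, y_pred, groups):
    """Gap in true positive rate across groups."""
    return _conditional_rate_gap(y_true, y_pred, groups, true_value=1)


def equalized_odds_difference(y_true, y_pred, groups):
    """Larger of the true-positive-rate gap and the false-positive-rate gap."""
    return max(
        _conditional_rate_gap(y_true, y_pred, groups, true_value=1),
        _conditional_rate_gap(y_true, y_pred, groups, true_value=0),
    )


# Toy data (hypothetical): the classifier favours group "a".
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

dp = demographic_parity_difference(y_pred, groups)
eo = equal_opportunity_difference(y_true, y_pred, groups)
eodds = equalized_odds_difference(y_true, y_pred, groups)
print(dp, eo, eodds)
```

A value of 0 means the groups are treated identically under that criterion; larger values indicate a larger disparity.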

Utility Metrics:

- Classification: Accuracy, precision, recall, F1-score, AUC-ROC

- Synthetic data quality: Fidelity, diversity, authenticity

Explainability:

- Feature importance analysis

- Individual prediction explanations (SHAP, LIME)
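
For intuition about feature importance analysis, the sketch below uses permutation importance, a simple model-agnostic technique: shuffle one feature column at a time and record how much accuracy drops. This is a swapped-in illustration, not SHAP or LIME, and not FairX's code; the helper names, the toy model, and the data are assumptions.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(labels)


def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Mean accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            # Rebuild the rows with only column j replaced by its shuffled values.
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


def model(row):
    """Toy classifier (hypothetical): uses feature 0 only and ignores feature 1."""
    return int(row[0] > 0.5)


rows = [[0.1, 5.0], [0.9, 1.0], [0.2, 3.0], [0.8, 2.0], [0.3, 4.0], [0.7, 0.0]]
labels = [0, 1, 0, 1, 0, 1]
importances = permutation_importance(model, rows, labels)
print(importances)
```

Because the toy model never reads feature 1, shuffling that column cannot change any prediction, so its importance is exactly zero.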

3. Synthetic Data Evaluation

A distinguishing capability of FairX is the evaluation of synthetic data generated by fair generative models along three axes:

- Statistical similarity: Distribution matching, correlation preservation

- Predictive performance: Downstream task accuracy, train-on-synthetic-test-on-real (TSTR) evaluation

- Fairness preservation: Verification that fairness properties transfer from synthetic to real data
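
The train-on-synthetic, test-on-real (TSTR) protocol mentioned above can be sketched as follows: fit one model on synthetic data and another on real training data, then score both on the same held-out real test set. The nearest-centroid classifier, the function names, and the toy data are all assumptions chosen to keep the example dependency-free; they do not reflect FairX's models or API.

```python
def fit_centroids(rows, labels):
    """Nearest-centroid 'model': the mean feature vector of each class."""
    centroids = {}
    for label in set(labels):
        members = [r for r, y in zip(rows, labels) if y == label]
        centroids[label] = [sum(col) / len(members) for col in zip(*members)]
    return centroids


def predict(centroids, row):
    """Return the label whose centroid is closest to the row."""
    def sq_dist(center):
        return sum((a - b) ** 2 for a, b in zip(row, center))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))


def accuracy(centroids, rows, labels):
    """Fraction of rows classified correctly."""
    return sum(predict(centroids, r) == y for r, y in zip(rows, labels)) / len(labels)


def tstr_and_trtr(synth_X, synth_y, train_X, train_y, test_X, test_y):
    """Accuracy on real test data after training on synthetic vs. real data."""
    tstr = accuracy(fit_centroids(synth_X, synth_y), test_X, test_y)
    trtr = accuracy(fit_centroids(train_X, train_y), test_X, test_y)
    return tstr, trtr


# Toy data (hypothetical): the synthetic set mimics the real class structure.
real_train_X = [[0.0, 0.1], [0.2, 0.0], [1.0, 0.9], [0.8, 1.0]]
real_train_y = [0, 0, 1, 1]
real_test_X = [[0.1, 0.2], [0.9, 0.8], [0.3, 0.1], [0.7, 0.9]]
real_test_y = [0, 1, 0, 1]
synth_X = [[0.1, 0.0], [0.0, 0.3], [0.9, 1.0], [1.0, 0.7]]
synth_y = [0, 0, 1, 1]

tstr, trtr = tstr_and_trtr(synth_X, synth_y, real_train_X, real_train_y,
                           real_test_X, real_test_y)
print("TSTR accuracy:", tstr, "TRTR accuracy:", trtr)
```

A small gap between the two scores suggests the synthetic data preserves the signal needed for the downstream task; the same comparison can be repeated with a fairness metric in place of accuracy to check fairness preservation.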

4. Flexible and Extensible

- Custom datasets: Easy integration of user-provided datasets

- Custom metrics: Extensible framework for adding new fairness/utility metrics

- Custom models: Support for user-defined bias-mitigation techniques

- Multiple data modalities: Tabular and image data support

Technical Implementation

Language: Python 3.x

Key Dependencies:

- scikit-learn, PyTorch/TensorFlow for model training

- AIF360, Fairlearn for fairness metrics

- SHAP, LIME for explainability

- NumPy, Pandas for data processing

Architecture:

- Modular design with separate components for data loading, model training, evaluation, and visualization

- Pipeline-based workflow for reproducible experiments

- Batch evaluation support for comparing multiple models/configurations
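
The batch-evaluation idea can be pictured with a short sketch: run several candidate models over the same data and collect the same metrics for each, so results are directly comparable. This page does not describe FairX's actual pipeline API, so everything here (`evaluate_all`, the threshold models, the toy data) is hypothetical and only illustrates the pattern.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def demographic_parity_difference(y_pred, groups):
    """Gap in selection rate P(pred = 1) across groups."""
    rates = []
    for group in set(groups):
        preds = [p for p, g in zip(y_pred, groups) if g == group]
        rates.append(sum(preds) / len(preds))
    return max(rates) - min(rates)


def evaluate_all(models, rows, y_true, groups):
    """Score every model with the same utility and fairness metrics."""
    results = {}
    for name, model in models.items():
        y_pred = [model(row) for row in rows]
        results[name] = {
            "accuracy": accuracy(y_true, y_pred),
            "dp_difference": demographic_parity_difference(y_pred, groups),
        }
    return results


# Toy setup (hypothetical): two decision thresholds over a single score feature.
rows = [[0.9], [0.6], [0.4], [0.2], [0.7], [0.45], [0.3], [0.1]]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
models = {
    "threshold_0.50": lambda row: int(row[0] >= 0.50),
    "threshold_0.42": lambda row: int(row[0] >= 0.42),
}

results = evaluate_all(models, rows, y_true, groups)
for name, scores in results.items():
    print(name, scores)
```

Keeping the metric computation in one place and looping over configurations is what makes the comparison consistent across models.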

Use Cases

FairX is designed for:

- Researchers: Benchmarking new fairness techniques against state-of-the-art baselines

- Practitioners: Evaluating fairness of production ML systems

- Policymakers: Auditing AI systems for compliance with fairness regulations

Impact

FairX aims to broaden access to fairness evaluation by:

- Reducing the engineering barrier to fairness research

- Standardizing evaluation protocols across the community

- Enabling systematic comparison of fairness techniques

- Supporting the emerging field of fair synthetic data generation

- Providing transparency through comprehensive explainability tools

The tool is publicly available on GitHub and has been designed with ease of use and extensibility in mind, making advanced fairness evaluation accessible to a broad audience.

Related Publications

Representative Synthetic Data for Fair Decision Making

Md Fahim Sikder

2025

Deep generative models and representation learning techniques have become essential to modern machine learning, enabling both the creation of synthetic data and the extraction of meaningful features …

FairX: A comprehensive benchmarking tool for model analysis using fairness, utility, and eXplainability

Md Fahim Sikder, Resmi Ramachandranpillai, Daniel de Leng, Fredrik Heintz

AEQUITAS, co-located with ECAI 2024 – 2024

We present FairX, an open-source Python-based benchmarking tool designed for the comprehensive analysis of models under the umbrella of fairness, utility, and eXplainability (XAI). FairX enables users …